April 30, 2026 at 5:31 PM

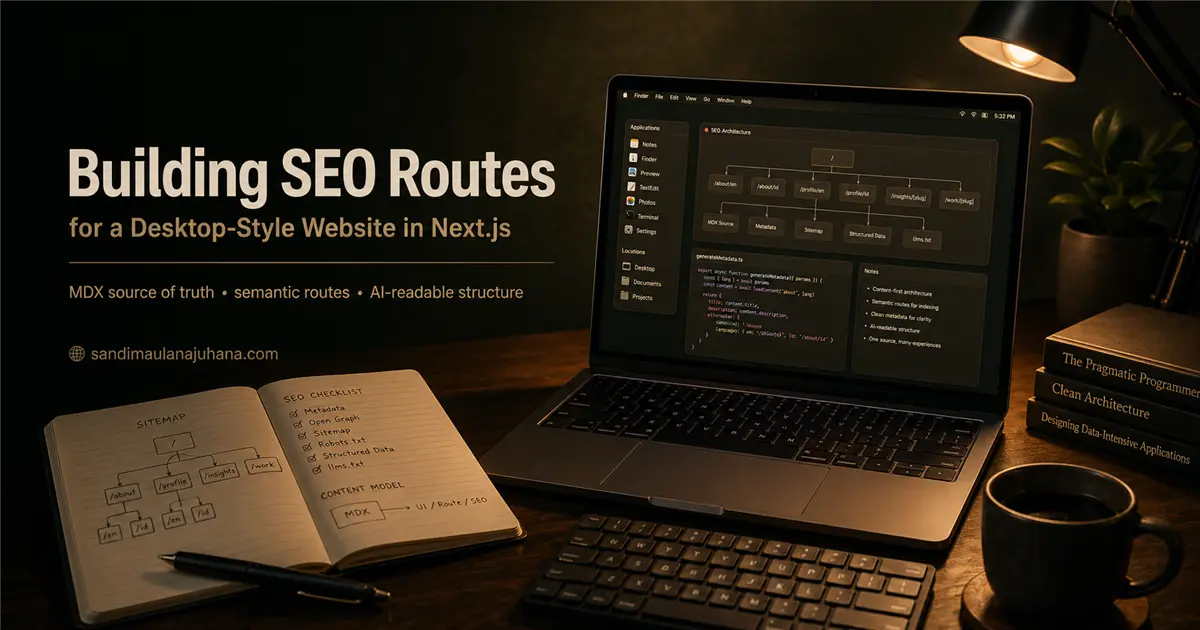

Building SEO Routes for a Desktop-Style Website in Next.js

How I structure MDX content, full-page routes, metadata, sitemap, robots.txt, and AI-readable files for a desktop-inspired portfolio in Next.js.

Notes on Turning an App-Like Website into Crawlable Pages

In a previous note, I wrote about why a desktop-style website can still have strong SEO.

This article is the more technical continuation.

Not as a strict tutorial, but as a practical look at how I usually think about the architecture behind it: how a website that feels like a desktop can still expose clean routes, readable content, metadata, sitemap entries, structured data, and AI-readable text.

The interesting part is not only the visual layer.

The interesting part is the boundary.

Where does the desktop experience end? Where does the semantic web begin? And how do both layers use the same content without duplicating everything?

That is the main problem I want to solve.

1. Start from Content, Not from the Window

When building a desktop-style website, it is tempting to start from the window component.

AboutWindow. ReadmeWindow. PreviewWindow. NotesWindow. FinderWindow.

This is natural because the UI is the most visible part.

But if the content only lives inside those components, the architecture becomes fragile. The website may look good, but the content becomes harder to reuse.

For SEO, this is usually the wrong direction.

I prefer starting from content files.

For example:

    public/
      about/
        page.mdx
      documents/
        sandi.mdx/
          page.mdx
      desktop/
        items/
          pharmart/
            page.mdx
            pharmart.webp
      notes/
        insights/
          desktop-style-website-with-powerful-seo/
            page.mdx

The important idea is simple:

The content file owns the meaning. The window owns the experience. The route owns the public URL.

This separation keeps the system easier to scale.

When I add a new project, I do not want to edit five different UI components just to make it visible in Finder, Preview, SEO pages, and AI-readable files. I want the content file to carry the core information, then let each layer render it according to its responsibility.

2. Use MDX Frontmatter as the Shared Contract

MDX becomes useful because it gives the content a predictable contract.

A file can hold metadata and body content in one place:

    ---
    title: Pharmart
    description: A healthcare commerce project built with modern web architecture.
    language: en
    category: Work
    source: pharmart.webp
    static: pharmart.webp
    ---

    ## Overview

    Pharmart is a digital healthcare commerce project...

The frontmatter does not need to be complicated.

In fact, I prefer it to stay boring.

Fields like title, description, language, category, source, and static are enough for many cases. Later, when the content needs more capability, fields like speech, order, or tags can be added carefully.

The key is consistency.

If every desktop item has a predictable frontmatter shape, the loader can build many experiences from it:

- desktop icon data.

- Finder row data.

- Preview window data.

- SEO route metadata.

- sitemap entry.

- structured data.

- AI-readable summary.

This is where frontmatter becomes more than metadata.

It becomes a small content contract.
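
To make the contract idea concrete, here is a minimal sketch of how the frontmatter shape could be typed and normalized. The type name, the helper names, and the fallback values ("en", "Uncategorized") are assumptions for illustration, not part of the original setup; only the field names come from the example above.

```typescript
// Hypothetical contract type; field names follow the frontmatter example,
// while the optional markers and defaults are assumptions.
type DesktopItemFrontmatter = {
  title: string;
  description: string;
  language: string;
  category: string;
  source?: string;
  static?: string;
};

// Returns a trimmed string field, or the fallback when it is missing,
// empty, or not a string.
function readString(
  data: Record<string, unknown>,
  key: string,
  fallback: string,
): string {
  const value = data[key];
  return typeof value === "string" && value.trim() !== ""
    ? value.trim()
    : fallback;
}

// Returns a trimmed string field, or undefined when it is absent or invalid.
function readOptionalString(
  data: Record<string, unknown>,
  key: string,
): string | undefined {
  const value = data[key];
  return typeof value === "string" && value.trim() !== ""
    ? value.trim()
    : undefined;
}

// Normalizes raw frontmatter (for example the `data` object from gray-matter)
// into the contract, using the folder name as the title fallback.
function normalizeFrontmatter(
  data: Record<string, unknown>,
  folderName: string,
): DesktopItemFrontmatter {
  return {
    title: readString(data, "title", folderName),
    description: readString(data, "description", ""),
    language: readString(data, "language", "en"),
    category: readString(data, "category", "Uncategorized"),
    source: readOptionalString(data, "source"),
    static: readOptionalString(data, "static"),
  };
}
```

With a function like this in the loader, every consumer (icon, Finder row, metadata) can rely on the same fields being present, whatever the author forgot to fill in.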

3. Write Content Loaders That Are Scoped and Predictable

In Next.js, reading local MDX content usually means using fs, path, and gray-matter.

A simple loader may look like this:

    import fs from "node:fs/promises";
    import path from "node:path";
    import matter from "gray-matter";

    // All desktop items live under one fixed directory.
    const itemsDirectory = path.join(
      process.cwd(),
      "public",
      "desktop",
      "items",
    );

    export async function loadDesktopItems() {
      const entries = await fs.readdir(itemsDirectory, { withFileTypes: true });

      const items = await Promise.all(
        entries
          .filter((entry) => entry.isDirectory())
          .map(async (entry) => {
            const itemDirectory = path.join(itemsDirectory, entry.name);
            const sourcePath = path.join(itemDirectory, "page.mdx");
            const raw = await fs.readFile(sourcePath, "utf8");

            // gray-matter splits the frontmatter (data) from the MDX body (content).
            const { data, content } = matter(raw);

            // Normalize here so the UI never has to guess about missing fields.
            return {
              slug: entry.name,
              title: String(data.title ?? entry.name),
              description: String(data.description ?? ""),
              content,
            };
          }),
      );

      return items;
    }

There are two details I care about here.

First, the path is scoped.

The loader reads from:

    path.join(process.cwd(), "public", "desktop", "items")

not from a fully dynamic project-wide path.

Second, the loader normalizes data.

The UI should not need to guess whether title exists, whether description is missing, or whether the slug should come from frontmatter or folder name. That work belongs closer to the content loader.

This is not only a cleanliness issue.

It also reduces SEO mistakes.

Bad metadata often comes from unclear content contracts.
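
The scoping point can be pushed one step further when a slug arrives from a URL instead of from readdir. The following is a sketch under that assumption; resolveItemSource is a hypothetical helper, not something the loader above already contains.

```typescript
import * as path from "node:path";

// Assumed base directory, matching the loader's scope in this article.
const itemsDirectory = path.join(process.cwd(), "public", "desktop", "items");

// Hypothetical helper: resolves a slug to its page.mdx path and refuses
// anything that would leave itemsDirectory (for example "../../secret").
function resolveItemSource(slug: string): string {
  const resolved = path.resolve(itemsDirectory, slug, "page.mdx");
  const relative = path.relative(itemsDirectory, resolved);

  const escapes =
    relative === ".." ||
    relative.startsWith(".." + path.sep) ||
    path.isAbsolute(relative);

  if (escapes) {
    throw new Error(`Slug escapes the content directory: ${slug}`);
  }

  return resolved;
}
```

The check compares the resolved path against the base directory instead of inspecting the slug string, so it does not depend on guessing every way a path can be written.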

4. Render the Same Content in Desktop Windows

Once the loader returns predictable data, the desktop UI can stay focused on experience.

For example, a Preview window should not need to know how MDX files are stored on disk. It only needs an item:

    type PreviewItem = {
      title: string;
      description: string;
      imageSrc?: string;
      content: string;
    };

Then the desktop can open it:

    <PreviewWindow
      item={{
        title: project.title,
        description: project.description,
        imageSrc: project.staticSrc,
        content: project.content,
      }}
    />

The same idea applies to TextEdit-style documents.

The window should render the document, not own the document.

This sounds small, but it changes how the codebase grows. New windows can be added without changing the content format. New content can be added without rewriting the window logic.

That is the kind of separation I like in a desktop-style website.

The UI remains playful.

The data flow remains boring.

Usually, that is a good combination.
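
One way to keep the data flow boring is to put the mapping from loaded content to each window's props in small pure functions. This is only a sketch: LoadedItem, FinderRow, and both mapper names are hypothetical, and staticSrc is assumed to be an already resolved image URL.

```typescript
// Assumed shape of one loaded item; staticSrc is a hypothetical resolved URL.
type LoadedItem = {
  slug: string;
  title: string;
  description: string;
  content: string;
  staticSrc?: string;
};

// Props for a Preview-style window, as described in this section.
type PreviewItem = {
  title: string;
  description: string;
  imageSrc?: string;
  content: string;
};

// Hypothetical props for one row in a Finder-style list.
type FinderRow = {
  slug: string;
  name: string;
  summary: string;
};

// Maps loaded content to what the Preview window needs, nothing more.
function toPreviewItem(item: LoadedItem): PreviewItem {
  return {
    title: item.title,
    description: item.description,
    imageSrc: item.staticSrc,
    content: item.content,
  };
}

// Maps the same loaded content to a compact Finder row.
function toFinderRow(item: LoadedItem): FinderRow {
  return {
    slug: item.slug,
    name: item.title,
    summary: item.description,
  };
}
```

Because the mappers are plain functions, a new window type only needs a new mapper; the content files and the loader stay untouched.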

5. Create Full-Page Routes for Important Content

The desktop experience is great for exploration.

But for SEO, important content still needs routes.

In Next.js App Router, a localized About route can look like this:

    src/
      app/
        about/
          page.tsx
          [lang]/
            page.tsx

The default route can redirect to a preferred language:

    import { redirect } from "next/navigation";

    export default function AboutIndexPage() {
      redirect("/about/en");
    }

Then the language route can statically render the page:

    import { notFound } from "next/navigation";
    import { loadAboutContent } from "@/lib/content/about";
    import { AboutFullPage } from "@/features/seo/components/AboutFullPage";

    const languages = ["en", "id"] as const;

    type AboutLanguage = (typeof languages)[number];

    // Pre-render one page per supported language at build time.
    export function generateStaticParams() {
      return languages.map((lang) => ({ lang }));
    }

    export default async function AboutPage({
      params,
    }: {
      // In recent Next.js versions (15+), params is a Promise and must be awaited.
      params: Promise<{ lang: string }>;
    }) {
      const { lang } = await params;

      // Unknown languages get a 404 instead of a half-rendered page.
      if (!languages.includes(lang as AboutLanguage)) {
        notFound();
      }

      const aboutContent = await loadAboutContent();

      return (
        <AboutFullPage
          aboutContent={aboutContent}
          lang={lang as AboutLanguage}
        />
      );
    }

This pattern gives the content a normal public URL:

    /about/en
    /about/id

The desktop About window can still exist.

But now search engines, external links, and AI crawlers also have a clean route to read.

This is the hybrid model I prefer.

Window for experience. Route for indexing. Same content behind both.
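
A small refinement of the language check is a type guard, which lets TypeScript narrow the route parameter without casts. This is a sketch of that idea; isAboutLanguage and resolveAboutLanguage are hypothetical names.

```typescript
const languages = ["en", "id"] as const;

type AboutLanguage = (typeof languages)[number];

// Type guard: after this returns true, TypeScript treats the value as
// AboutLanguage, so no `as` cast is needed at the call site.
function isAboutLanguage(value: string): value is AboutLanguage {
  return (languages as readonly string[]).includes(value);
}

// Hypothetical convenience wrapper: returns the validated language or null,
// which a route could translate into notFound().
function resolveAboutLanguage(value: string): AboutLanguage | null {
  return isAboutLanguage(value) ? value : null;
}
```

In a route, the pattern would be to call resolveAboutLanguage on the awaited param and return a 404 when it yields null; everything after that point works with a narrowed type.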

6. Generate Metadata from the Same Content

A full-page route should not only render content.

It should also describe itself.

That is where generateMetadata becomes important:

    import type { Metadata } from "next";
    import { absoluteUrl, site } from "@/lib/seo/site";
    import { loadAboutContent } from "@/lib/content/about";

    export async function generateMetadata({
      params,
    }: {
      params: Promise<{ lang: string }>;
    }): Promise<Metadata> {
      const { lang } = await params;
      const aboutContent = await loadAboutContent();

      // Pick the localized content that the page itself renders.
      const content = lang === "id" ? aboutContent.idContent : aboutContent.en;

      return {
        title: content.title,
        description: content.description,
        // Relative URLs here are resolved against metadataBase, which is
        // assumed to be set in the root layout.
        alternates: {
          canonical: `/about/${lang}`,
          languages: {
            en: "/about/en",
            id: "/about/id",
          },
        },
        openGraph: {
          type: "profile",
          url: absoluteUrl(`/about/${lang}`),
          siteName: site.name,
          title: content.title,
          description: content.description,
        },
      };
    }

This is one of the parts I consider important.

Metadata should not be a separate hardcoded story.

If the content changes, the metadata should follow.

Of course, not everything needs to be automatic. Sometimes metadata needs editorial adjustment. But as a default pattern, the content source should remain close to the metadata source.

This reduces drift.

And drift is one of the quiet problems in SEO.

The page says one thing. The title says another. The Open Graph description says something old. The sitemap points somewhere else.

Small inconsistencies like that make a website feel less maintained, both for humans and machines.
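
The metadata example imports site and absoluteUrl from "@/lib/seo/site" without showing them. A minimal sketch of what such a module could contain follows; the site name, the example.com domain, and the aboutAlternates helper are all placeholders and assumptions, not the real configuration.

```typescript
// Hypothetical site config; name and URL are placeholders.
const site = {
  name: "Example Portfolio",
  url: "https://example.com",
} as const;

// Builds an absolute URL from a site-relative path using the WHATWG URL API.
function absoluteUrl(pathname: string): string {
  return new URL(pathname, site.url).toString();
}

const aboutLanguages = ["en", "id"] as const;

type AboutLanguage = (typeof aboutLanguages)[number];

// Hypothetical helper: derives canonical and language alternates from the
// language list, so adding a language updates every page's metadata at once.
function aboutAlternates(lang: AboutLanguage): {
  canonical: string;
  languages: Record<string, string>;
} {
  const languages: Record<string, string> = {};
  for (const code of aboutLanguages) {
    languages[code] = `/about/${code}`;
  }
  return { canonical: `/about/${lang}`, languages };
}
```

Keeping URL construction in one place is a cheap defense against drift: metadata, sitemap, and structured data can all call the same helper instead of concatenating strings separately.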

7. Keep Sitemap Explicit Enough to Be Trusted

For content-rich websites, sitemap generation should not be an afterthought.

A simple sitemap can combine static routes and dynamic content:

    import type { MetadataRoute } from "next";
    import { absoluteUrl } from "@/lib/seo/site";
    import { loadNotes } from "@/lib/content/notes";
    import { loadDesktopItems } from "@/lib/content/desktopItems";

    export default async function sitemap(): Promise<MetadataRoute.Sitemap> {
      // Load both content collections in parallel.
      const [notes, desktopItems] = await Promise.all([
        loadNotes(),
        loadDesktopItems(),
      ]);

      // Assumes the loaders also return a date field for each entry
      // (note.date, item.modifiedAt).
      return [
        {
          url: absoluteUrl("/"),
          priority: 1,
        },
        {
          url: absoluteUrl("/about/en"),
          priority: 0.9,
        },
        {
          url: absoluteUrl("/about/id"),
          priority: 0.9,
        },
        ...notes.map((note) => ({
          url: absoluteUrl(`/insights/${note.slug}`),
          lastModified: note.date,
          priority: 0.75,
        })),
        ...desktopItems.map((item) => ({
          url: absoluteUrl(`/work/${item.slug}`),
          lastModified: item.modifiedAt,
          priority: 0.8,
        })),
      ];
    }

The exact priority numbers are not the most important part.

What matters is that important content is discoverable.

For a desktop-style website, this matters because not all content is naturally reached through normal page navigation. Some content may be opened through a dock, a desktop icon, a window, or Spotlight.

That is fine for users.

But crawlers still benefit from a clean sitemap.

The sitemap becomes the formal map of the website.
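
If the sitemap is supposed to be trusted, it helps to build the entries in a pure function that can be checked on its own. The sketch below assumes a simplified entry shape and a hypothetical buildSitemapEntries helper; it fails loudly when two content items would produce the same URL.

```typescript
// Simplified entry shape for this sketch (not the Next.js type itself).
type SitemapEntry = {
  url: string;
  lastModified?: string;
  priority?: number;
};

// Assumed minimal content reference; modifiedAt is optional here.
type ContentRef = {
  slug: string;
  modifiedAt?: string;
};

// Hypothetical helper: builds sitemap entries from content references and
// throws when the same URL appears twice.
function buildSitemapEntries(
  baseUrl: string,
  notes: ContentRef[],
  items: ContentRef[],
): SitemapEntry[] {
  const toUrl = (pathname: string) => new URL(pathname, baseUrl).toString();

  const entries: SitemapEntry[] = [
    { url: toUrl("/"), priority: 1 },
    ...notes.map((note) => ({
      url: toUrl(`/insights/${note.slug}`),
      lastModified: note.modifiedAt,
      priority: 0.75,
    })),
    ...items.map((item) => ({
      url: toUrl(`/work/${item.slug}`),
      lastModified: item.modifiedAt,
      priority: 0.8,
    })),
  ];

  const seen = new Set<string>();
  for (const entry of entries) {
    if (seen.has(entry.url)) {
      throw new Error(`Duplicate sitemap URL: ${entry.url}`);
    }
    seen.add(entry.url);
  }

  return entries;
}
```

The sitemap route can then stay a thin wrapper that loads content and returns the result of this function.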

8. Robots.txt Should Be Clear, Not Clever

I prefer a simple robots.txt.

If the intention is to allow indexing, say it clearly:

    import type { MetadataRoute } from "next";
    import { absoluteUrl } from "@/lib/seo/site";

    const AI_AND_SEARCH_AGENTS = [
      "Googlebot",
      "Bingbot",
      "Applebot",
      "GPTBot",
      "ChatGPT-User",
      "OAI-SearchBot",
      "ClaudeBot",
      "Claude-User",
      "PerplexityBot",
    ] as const;

    export default function robots(): MetadataRoute.Robots {
      return {
        rules: [
          {
            userAgent: "*",
            allow: "/",
          },
          {
            userAgent: [...AI_AND_SEARCH_AGENTS],
            allow: "/",
          },
        ],
        sitemap: absoluteUrl("/sitemap.xml"),
      };
    }

Technically, the wildcard rule already allows everyone.

The explicit agent list is more of a documentation layer. It makes the intent visible: this website is open to search engines and AI crawlers that respect robots rules.

I do not like making this overly clever.

If a website wants to be discovered, the robots file should not feel like a puzzle.

9. Add Structured Data for Stronger Meaning

Structured data helps describe what the page represents.

For a personal portfolio, this can include Person, WebSite, AboutPage, Article, or CreativeWork.

A simple JSON-LD block can look like this:

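    type JsonLdProps = {
      data: Record<string, unknown>;
    };

    // Rendered as a plain <script> tag so the JSON-LD is part of the
    // server-rendered HTML. next/script is meant for executable scripts, and
    // its beforeInteractive strategy is intended for the root layout only.
    export function JsonLd({ data }: JsonLdProps) {
      return (
        <script
          type="application/ld+json"
          dangerouslySetInnerHTML={{
            // Escape "<" so content cannot close the script tag early.
            __html: JSON.stringify(data).replace(/</g, "\\u003c"),
          }}
        />
      );
    }

Then a page can pass structured data: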

    <JsonLd
      data={{
        "@context": "https://schema.org",
        "@type": "AboutPage",
        name: "About Sandi Maulana Juhana",
        url: "https://sandimaulanajuhana.com/about/en",
        about: {
          "@type": "Person",
          name: "Sandi Maulana Juhana",
          jobTitle: "Full-Stack Engineer",
        },
      }}
    />

Structured data does not replace good content.

It clarifies it.

That distinction matters.

If the page is thin, structured data will not magically make it strong. But if the page already has clear content, structured data helps machines understand its role more confidently.
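
To keep structured data aligned with the page, the JSON-LD object can be built from the same content object that feeds the page and its metadata. This is a sketch under assumptions: the AboutContent shape and the buildAboutJsonLd name are hypothetical.

```typescript
// Assumed shape of the localized About content for this sketch.
type AboutContent = {
  title: string;
  description: string;
  personName: string;
  jobTitle: string;
};

// Hypothetical builder: derives an AboutPage JSON-LD object from content,
// so the structured data cannot silently diverge from the visible page.
function buildAboutJsonLd(
  content: AboutContent,
  url: string,
): Record<string, unknown> {
  return {
    "@context": "https://schema.org",
    "@type": "AboutPage",
    name: content.title,
    description: content.description,
    url,
    about: {
      "@type": "Person",
      name: content.personName,
      jobTitle: content.jobTitle,
    },
  };
}
```

The result can be passed straight to a JsonLd-style component as its data prop.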

10. Make AI-Readable Files from the Same Content

For AI readability, I like the idea of maintaining llms.txt and llms-full.txt.

They are not replacements for sitemap or structured data.

They are more like a readable guide.

For example:

    # Sandi Maulana Juhana

    Personal website and desktop-style portfolio of Sandi Maulana Juhana.

    ## Key Pages

    - Home: /
    - About English: /about/en
    - About Indonesian: /about/id
    - Profile English: /profile/en
    - Profile Indonesian: /profile/id

    ## Topics

    - Full-stack engineering
    - Next.js architecture
    - Desktop-style web interfaces
    - SEO and AI-readable content

The important thing is not only having the file.

The important thing is keeping it aligned with the real content.

If a new route is added, the AI-readable map should be updated. If the About content changes, the summary should not stay old forever.

Again, this is about reducing drift.

Good SEO is often less dramatic than people think.

It is mostly about keeping public meaning consistent across many small surfaces.
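
One way to keep llms.txt aligned is to render it from data instead of editing it by hand. The following is a minimal sketch; LlmsInput and renderLlmsTxt are hypothetical names, and the page list is assumed to come from the same route definitions used elsewhere.

```typescript
// Hypothetical input shape for the generated file.
type LlmsPage = {
  label: string;
  path: string;
};

type LlmsInput = {
  title: string;
  summary: string;
  pages: LlmsPage[];
  topics: string[];
};

// Renders the llms.txt body in the same layout as the example above.
function renderLlmsTxt(input: LlmsInput): string {
  const lines = [
    `# ${input.title}`,
    "",
    input.summary,
    "",
    "## Key Pages",
    "",
    ...input.pages.map((page) => `- ${page.label}: ${page.path}`),
    "",
    "## Topics",
    "",
    ...input.topics.map((topic) => `- ${topic}`),
  ];
  return lines.join("\n") + "\n";
}
```

The output could be written to a static file at build time or returned as plain text from a route handler; either way, adding a route to the shared list updates the AI-readable map as well.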

11. Avoid Duplicating the Content Itself

There is one trap in this kind of architecture:

Duplicating content too much.

It can happen slowly.

About text inside AboutWindow. Another about text inside /about/en. Another summary inside schema. Another one inside llms.txt. Another one inside Open Graph metadata.

At first, this feels harmless.

But over time, one version changes and the others do not.

That is why I prefer this flow:

    MDX content
      -> desktop window
      -> full-page route
      -> metadata
      -> sitemap
      -> structured data
      -> llms.txt

Not every layer needs the full content.

But every layer should be derived from, or at least aligned with, the same source of truth.

This is what makes the system feel clean.

The website can have many faces without having many conflicting stories.

Closing Reflection

A desktop-style website can easily become a collection of impressive UI pieces.

That is not necessarily bad.

But if the goal is long-term discoverability, the architecture needs to go deeper than the visual metaphor.

The window is not the content. The dock is not the navigation strategy. The animation is not the information architecture. The desktop is not a replacement for the web.

It is an experience layer.

The web still needs routes. Search still needs metadata. AI still needs readable context. Users still need links they can open, share, and revisit.

For me, the strongest version of a desktop-style website is not the one that hides the web completely.

It is the one that understands the web well enough to bend its interface without breaking its fundamentals.

That is the balance I keep aiming for.

Playful on the surface.

Structured underneath.