May 8, 2026 at 11:00 AM

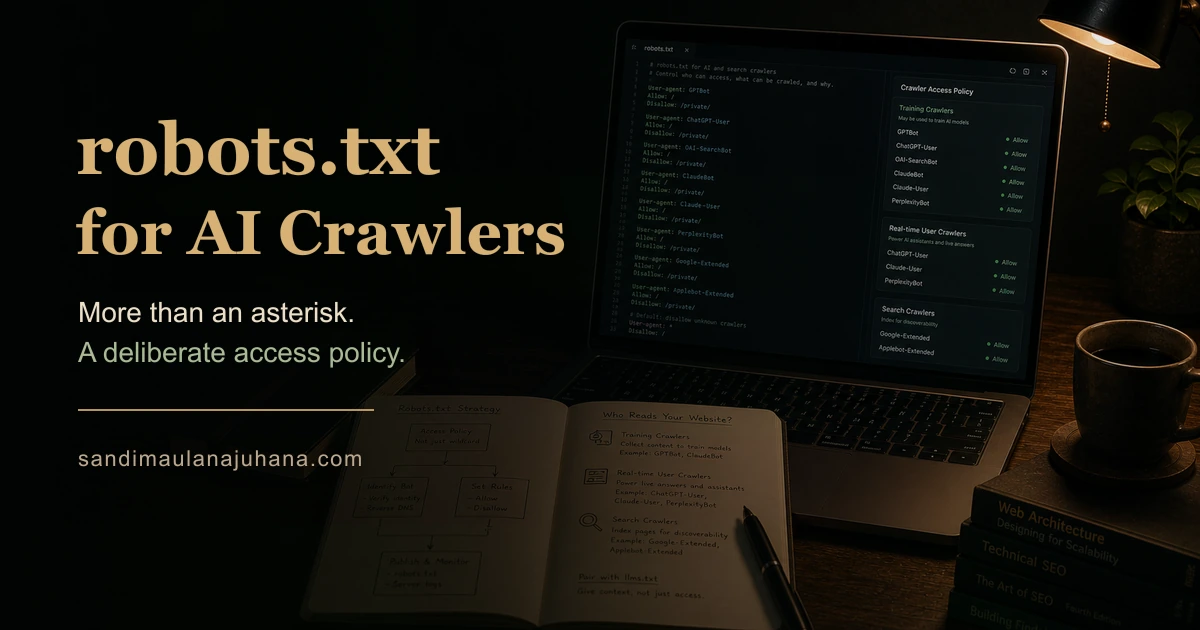

robots.txt for AI Crawlers: More Than Just an Asterisk

Most developers write userAgent "*" and call it done. But AI crawlers are not monolithic — OpenAI alone has four distinct agents, each with a different job. On understanding who is actually reading your site, and being intentional about it.

Notes on Who Is Actually Reading Your Site

Most robots.txt files look like this:

User-agent: *

Allow: /

Two lines. Done. Every bot gets access to everything.

That was a reasonable default when the only readers that mattered were Googlebot and a handful of other search crawlers. It is a less precise answer now.

AI systems crawl the web for different reasons — training datasets, real-time browsing, search indexing, ad targeting. Each reason has its own user agent. And each user agent can be controlled independently.

Most sites do not make that distinction. They either allow everything or block everything, without knowing what they are actually allowing or blocking.

1. One Asterisk Does Not Cover Everything

The * wildcard in robots.txt matches any user agent not explicitly listed.

In practice, this usually means everything is allowed, which is fine if you do not care about the distinction. But there is a difference between a bot that crawls your site to include it in a search result and a bot that crawls your site to use the content as training data for a language model.

Both can be controlled. Here the control is per user agent rather than per URL path.

User-agent: GPTBot

Disallow: /

One directive. OpenAI's training crawler is now blocked. ChatGPT's real-time browsing still works, because that is a different user agent.

This is the distinction most developers miss. You can block one OpenAI agent and allow another. They are not the same thing.
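The matching rule behind this is worth seeing once. Under the Robots Exclusion Protocol (RFC 9309), a crawler obeys the group that names it and falls back to the * group only when no group does. The sketch below models that selection. `Group` and `selectGroup` are illustrative names, not part of any library, and a real parser handles more cases (path matching, product tokens embedded in a full User-Agent header).

```typescript
// Illustrative model of robots.txt group selection (RFC 9309).
// `Group` and `selectGroup` are hypothetical names for this sketch.
type Group = {
  userAgents: string[]; // product tokens named in the group, e.g. ["GPTBot"]
  disallow: string[];   // path prefixes the group disallows
};

function selectGroup(groups: Group[], productToken: string): Group | undefined {
  const token = productToken.toLowerCase();

  // A group that names the crawler wins; matching is case-insensitive.
  const specific = groups.filter((g) =>
    g.userAgents.some((ua) => ua.toLowerCase() === token),
  );
  if (specific.length > 0) {
    // Several groups naming the same crawler are combined into one.
    const disallow: string[] = [];
    for (const g of specific) disallow.push(...g.disallow);
    return { userAgents: [productToken], disallow };
  }

  // Only when no group names the crawler does "*" apply.
  return groups.find((g) => g.userAgents.some((ua) => ua === "*"));
}

const groups: Group[] = [
  { userAgents: ["*"], disallow: ["/drafts/"] },
  { userAgents: ["GPTBot"], disallow: ["/"] },
];

console.log(selectGroup(groups, "GPTBot")?.disallow);       // the GPTBot group: "/"
console.log(selectGroup(groups, "ChatGPT-User")?.disallow); // falls back to "*": "/drafts/"
```

Note the side effect: a named group replaces the * group for that crawler rather than adding to it, so anything you disallow under * has to be repeated in the named group if you still want it to apply.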

2. OpenAI Has Four User Agents

This is the part that surprises most people.

"GPTBot", // Training data crawler: crawls your site for model training

"ChatGPT-User", // Real-time browsing: when a user asks ChatGPT to read a URL

"OAI-SearchBot", // Search index: powers ChatGPT search results

"OAI-AdsBot", // Ad targeting: used for advertising products

Four agents. Four different jobs. Four independent directives in robots.txt.

A developer who writes Disallow: / for GPTBot has blocked OpenAI's training crawler. But a user who pastes their URL into ChatGPT and asks it to summarize the page — that request goes through ChatGPT-User, which is still allowed.

The practical question is: which of these do you actually want to control?

For a portfolio or a blog, blocking training crawlers while allowing real-time browsing is a reasonable position. The content is yours. Whether it ends up in a training dataset is a different question from whether ChatGPT can read it on demand.
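That position can be written down as data instead of prose. A minimal sketch, assuming the same rule shape that the Next.js implementation later in this post returns (userAgent, allow, disallow). The local `Rule` type stands in for it so the snippet runs without Next.js, and `Purpose`, `OPENAI_AGENTS`, and `buildRules` are hypothetical names.

```typescript
// Sketch: derive robots rules from a per-purpose policy.
// `Rule` mirrors the shape of a Next.js robots rule so this runs standalone;
// every other name here is hypothetical.
type Rule = { userAgent: string; allow?: string; disallow?: string };

type Purpose = "training" | "realtime" | "search" | "ads";

// OpenAI's agents as listed above, keyed by what each one is for.
const OPENAI_AGENTS: Record<Purpose, string> = {
  training: "GPTBot",
  realtime: "ChatGPT-User",
  search: "OAI-SearchBot",
  ads: "OAI-AdsBot",
};

function buildRules(blocked: Purpose[]): Rule[] {
  // Everyone not named below keeps the permissive default.
  const rules: Rule[] = [{ userAgent: "*", allow: "/" }];
  for (const purpose of Object.keys(OPENAI_AGENTS) as Purpose[]) {
    const userAgent = OPENAI_AGENTS[purpose];
    rules.push(
      blocked.indexOf(purpose) !== -1
        ? { userAgent, disallow: "/" }
        : { userAgent, allow: "/" },
    );
  }
  return rules;
}

// The portfolio/blog position: no training, on-demand reading still allowed.
const policyRules = buildRules(["training"]);
console.log(JSON.stringify(policyRules, null, 2));
```

Keeping the purpose next to the agent name means the decision you are making ("no training") is visible in the code, not just the string "GPTBot".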

3. Claude Also Has Four

Anthropic follows the same pattern.

"ClaudeBot", // Training and general crawling

"Claude-User", // Real-time: when a user asks Claude to read a URL

"Claude-SearchBot", // Search index: Claude's web search capability

"anthropic-ai", // General Anthropic crawler

The naming conventions differ between companies, but the structure is the same: one company, multiple agents, each with a specific function.

This is not accidental. It gives site owners meaningful control. You can allow Anthropic's search crawler while blocking their training crawler. Or allow everything. Or block everything. The granularity is there if you want to use it.

4. The Others Worth Knowing

Beyond OpenAI and Anthropic:

"PerplexityBot", // Perplexity's main crawler

"Perplexity-User", // Real-time browsing within Perplexity

"Google-Extended", // Google's AI training control, separate from Googlebot

"Applebot-Extended", // Apple Intelligence, different from standard Applebot

"YouBot", // You.com AI search

Google-Extended is the one most developers overlook. Googlebot and Google-Extended are different tokens with different purposes. Googlebot indexes your site for search results. Google-Extended is a robots.txt control token rather than a separate crawler: Google fetches pages with its existing crawlers, and the token decides whether that content may be used for AI training and products like Gemini.

You can opt out of Google-Extended without affecting your Google search ranking. The two are explicitly separated.

Applebot-Extended is Apple's equivalent, introduced for Apple Intelligence. The standard Applebot still does the crawling, for features such as Siri and Spotlight suggestions. The Extended token does not crawl on its own. It controls whether content Applebot has already fetched may be used for Apple's AI features.

5. The Implementation in Next.js

Next.js generates robots.txt from a special metadata file, robots.ts, in the app directory (here src/app/robots.ts).

import type { MetadataRoute } from "next";

const AI_AND_SEARCH_AGENTS = [

"Googlebot",

"Bingbot",

"BingPreview",

"Applebot",

"Applebot-Extended",

"GPTBot",

"ChatGPT-User",

"OAI-SearchBot",

"OAI-AdsBot",

"ClaudeBot",

"Claude-User",

"Claude-SearchBot",

"anthropic-ai",

"PerplexityBot",

"Perplexity-User",

"Google-Extended",

"YouBot",

] as const;

export default function robots(): MetadataRoute.Robots {

return {

rules: [

{ userAgent: "*", allow: "/" },

{ userAgent: [...AI_AND_SEARCH_AGENTS], allow: "/" },

],

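sitemap: absoluteUrl("/sitemap.xml"), // absoluteUrl is this project's own helper, not a Next.js export; it is expected to return an absolute URL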

};

}

The MetadataRoute.Robots type describes the shape of the returned object. Next.js handles the serialization, generating a correctly formatted robots.txt response that is built once at build time by default.
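To make the output concrete, here is roughly what such an object becomes as text. This is a hand-written approximation for illustration, not Next.js's actual serializer. `renderRobotsTxt` and the local `Robots` type are hypothetical, and the example.com sitemap URL is a placeholder.

```typescript
// Approximate rendering of a robots rules object as robots.txt text.
// Not Next.js's real serializer; names and types here are hypothetical.
type Rule = { userAgent: string | string[]; allow?: string; disallow?: string };
type Robots = { rules: Rule[]; sitemap?: string };

function renderRobotsTxt(robots: Robots): string {
  const lines: string[] = [];
  for (const rule of robots.rules) {
    // A rule with several agents becomes one group with several User-agent lines.
    const agents = Array.isArray(rule.userAgent) ? rule.userAgent : [rule.userAgent];
    for (const agent of agents) lines.push(`User-agent: ${agent}`);
    if (rule.allow !== undefined) lines.push(`Allow: ${rule.allow}`);
    if (rule.disallow !== undefined) lines.push(`Disallow: ${rule.disallow}`);
    lines.push(""); // blank line between groups
  }
  if (robots.sitemap !== undefined) lines.push(`Sitemap: ${robots.sitemap}`);
  return lines.join("\n");
}

const robotsTxt = renderRobotsTxt({
  rules: [
    { userAgent: "*", allow: "/" },
    { userAgent: ["GPTBot", "ClaudeBot"], disallow: "/" },
  ],
  sitemap: "https://example.com/sitemap.xml", // placeholder URL
});
console.log(robotsTxt);
```

In this sketch the second rule renders as a single group: two User-agent lines (GPTBot, ClaudeBot) followed by one Disallow: / line. That is the plain-text form a crawler actually reads.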

Two rules. Both allow everything. But the explicit listing of AI agents matters: it documents intent, and it makes future changes trivial. Blocking a specific agent is a one-line change, not a regex hunt through a plain text file.

The as const assertion on the array preserves the literal types — useful if these agent names are referenced elsewhere in the codebase for consistency.
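One way that pays off: the literal types can be turned into a union, so a typo in an agent name fails at compile time instead of silently producing a rule no crawler matches. A small sketch with a shortened list. `KNOWN_AGENTS`, `AgentName`, `BLOCKED`, and `ruleFor` are hypothetical names, not part of the code above.

```typescript
// Sketch: derive a union type from an `as const` array of agent names.
const KNOWN_AGENTS = ["GPTBot", "ChatGPT-User", "ClaudeBot", "Claude-User"] as const;

// Resolves to "GPTBot" | "ChatGPT-User" | "ClaudeBot" | "Claude-User".
type AgentName = (typeof KNOWN_AGENTS)[number];

// Blocking an agent is a one-line change to this list.
// A misspelling such as "GPTbot" would be a type error here.
const BLOCKED: readonly AgentName[] = ["GPTBot"];

type AgentRule = { userAgent: AgentName; allow?: string; disallow?: string };

function ruleFor(agent: AgentName): AgentRule {
  return BLOCKED.indexOf(agent) !== -1
    ? { userAgent: agent, disallow: "/" }
    : { userAgent: agent, allow: "/" };
}

// One rule per known agent, in the order they are listed.
const agentRules: AgentRule[] = KNOWN_AGENTS.map((agent) => ruleFor(agent));
console.log(agentRules);
```

Without as const, the array would be typed as string[] and the union would collapse to string, which is why the assertion is doing real work here.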

6. Allowing vs Blocking: The Real Question

The actual decision is not a technical one.

Blocking AI crawlers entirely is straightforward. But it is worth being clear about what you are blocking:

Training crawlers and tokens (GPTBot, ClaudeBot, Google-Extended): your content may be used in future model training. Blocking these means opting out of that.

Real-time crawlers (ChatGPT-User, Claude-User): these run when a user asks an AI to read your specific URL. Blocking these means your content cannot be summarized or analyzed on demand, even when a human explicitly requests it.

Search crawlers (OAI-SearchBot, Claude-SearchBot): these power AI search results. Blocking these means your site does not appear in AI-powered search.

For most sites — especially portfolios and blogs — the practical answer is: allow everything. The content is public. The goal is to be found and understood, by humans and AI systems alike.

The point of explicit listing is not necessarily to block, but to be intentional. To know what each agent does before deciding.

7. Verifying That a Bot Is Who It Claims to Be

Anyone can send a request with User-Agent: GPTBot.

A user agent string is just text. A scraper that wants to bypass your Disallow directives can set its user agent to anything — including the exact string of a well-behaved crawler.

The distinction matters: robots.txt directives are only as meaningful as the crawler's willingness to respect them. Legitimate AI crawlers from OpenAI, Anthropic, and Google do respect robots.txt — they have stated policies and reputations at stake. But a scraper impersonating GPTBot will not.

The actual verification mechanism is reverse DNS lookup.

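When a request arrives claiming to be a known crawler, you can check whether its IP address resolves back to a domain owned by that crawler's operator. Google documents this procedure for Googlebot, along with the hostnames to expect (ending in googlebot.com or google.com). OpenAI publishes the IP ranges its crawlers use, which gives you a second check: compare the request IP against the published list. The reverse DNS pattern cited for GPTBot (crawl-XXX-XXX-XXX-XXX.gpt.openai.com), and any equivalent for ClaudeBot, should be confirmed against OpenAI's and Anthropic's current documentation before you build a rule on it.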

# How to verify a bot claiming to be GPTBot:

# 1. Get the IP address from the request

# 2. Run: nslookup <IP-address>

# 3. Check if the returned hostname ends in .openai.com

# 4. Then: nslookup <that-hostname>

# 5. Confirm it resolves back to the original IP

This two-step check, known as forward-confirmed reverse DNS, is the verification method Google documents for Googlebot and one that other major crawlers have adopted.

robots.txt controls what honest crawlers do. Verification is how you distinguish the honest ones from the ones that merely claim to be.
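The same check can be sketched in code. This is a minimal version for IPv4 only, using Node's built-in dns module. `verifyCrawlerIp` and the `Resolver` type are hypothetical names, and the list of allowed hostname suffixes is something you have to supply from each operator's own documentation. Nothing here is an official verification API.

```typescript
import { reverse, resolve4 } from "node:dns/promises";

// Sketch of forward-confirmed reverse DNS (FCrDNS) for an IPv4 address.
// `Resolver` and `verifyCrawlerIp` are hypothetical names; the allowed
// hostname suffixes must come from the crawler operator's documentation.
type Resolver = {
  reverse: (ip: string) => Promise<string[]>;        // IP -> hostnames (PTR)
  resolve4: (hostname: string) => Promise<string[]>; // hostname -> IPv4 (A)
};

async function verifyCrawlerIp(
  ip: string,
  allowedSuffixes: string[],
  dns: Resolver = { reverse, resolve4 },
): Promise<boolean> {
  let hostnames: string[];
  try {
    hostnames = await dns.reverse(ip); // step 1: reverse lookup
  } catch {
    return false; // no PTR record, so the claim cannot be verified
  }

  for (const hostname of hostnames) {
    const host = hostname.toLowerCase();
    // step 2: the hostname must sit under a domain the operator documents
    if (!allowedSuffixes.some((suffix) => host.endsWith(suffix))) continue;
    try {
      // step 3: the forward lookup must return the original IP
      const addresses = await dns.resolve4(host);
      if (addresses.indexOf(ip) !== -1) return true;
    } catch {
      // forward lookup failed for this name; try the next one
    }
  }
  return false;
}

// Example call (performs real DNS queries, so it is left commented out):
// const ok = await verifyCrawlerIp(requestIp, [".googlebot.com", ".google.com"]);
```

The suffixes start with a dot on purpose, so that a hostname like evilgooglebot.com does not pass. The resolver is a parameter so the logic can be tested without touching the network. Results are worth caching per IP, since two DNS lookups on every request add latency.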

8. robots.txt and llms.txt Are Not the Same Job

Both files deal with how machines read your site. They solve different problems.

robots.txt controls access: which agents are allowed to crawl which paths. A compliant crawler consults it before requesting anything else, so the decision is made before any content is read.

llms.txt controls understanding — what the AI finds after it is allowed in. It is a curated document that tells the model who you are and what your site contains.

A site with only robots.txt gives AI crawlers permission to enter, then leaves them to wander and construct their own representation from whatever they find.

A site with only llms.txt has organized its content for AI consumption, but has no policy on which agents are allowed to access it.

Both together form a coherent strategy: deliberate access control paired with deliberate content presentation. The first answers "who can come in." The second answers "here is what you will find."

For a site that wants to be understood accurately by AI systems — not just indexed, but genuinely understood — neither file alone is sufficient.

Closing Reflection

A robots.txt file is a signal, not a wall.

Bots that intend to respect it will. Bots that do not will not.

But for the ones that do — and most major AI companies explicitly commit to respecting robots.txt — the signal matters. It is a machine-readable statement of intent: here is what I am willing to share, and with whom.

Writing * is not wrong. It just leaves that statement unspoken.

Understanding what each user agent actually does turns a two-line file into a deliberate policy. That is worth the ten minutes it takes to get right.