May 8, 2026 at 3:00 PM

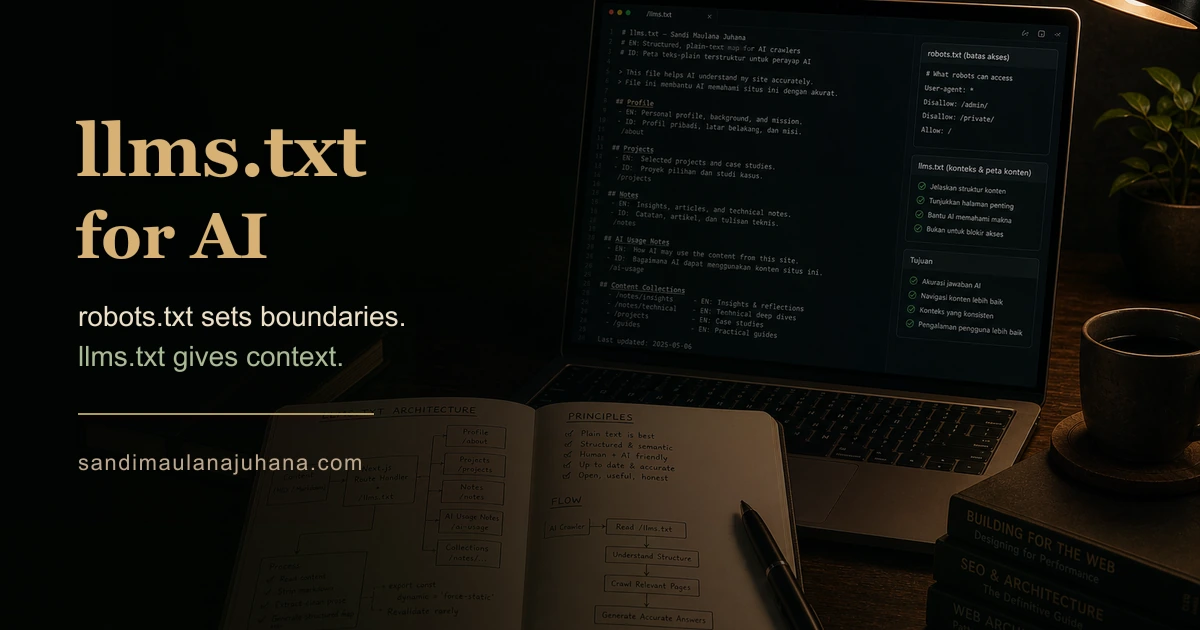

llms.txt: robots.txt for AI

robots.txt tells bots where not to go. llms.txt tells AI what is actually here. On building a structured plain-text document that lets AI crawlers understand your website without guessing.

Notes on Building for a Different Kind of Reader

Google reads your HTML and builds a model of your page.

AI reads your page and tries to answer questions about you.

Those are different goals. And they need different infrastructure.

A sitemap tells a search engine what pages exist. JSON-LD tells it what type of content you have. Meta tags tell it how to display your result in search.

None of that is designed for a system that needs to understand who you are, what you have built, what you have written — so it can answer a natural-language question about you accurately.

That is what llms.txt is for.

1. What llms.txt Actually Is

llms.txt is a proposed standard — not yet an RFC, not yet mandatory — that gives AI crawlers a dedicated entry point to your website's content.

Think of it as a README for your entire site, served as plain text at /llms.txt, structured for machines that should not have to parse HTML to understand you.

The format is simple:

- Markdown headings for sections

- Plain prose for descriptions

- No styling, no scripts, no layout

- A clear hierarchy of who you are and what you have made

Nothing proprietary. Nothing clever. Just structure and honesty.

2. Why AI Crawlers Need a Different File

When crawlers for ChatGPT, Claude, or Perplexity visit your website, they do not rely on your sitemap.

They read your pages. They parse the HTML, strip the layout, extract the text, and build a representation of what each page is about.

For a single article, this works reasonably well.

For a full website, with projects, writing, documents, and a profile in multiple languages, it works less well. The crawler may hit a rate limit, miss pages that are not linked prominently, or build an incomplete picture from whatever it managed to visit.

llms.txt solves this differently. Instead of letting the crawler wander and guess, you give it a curated map. The whole site, in one place, structured the way you want it to be understood.

3. Two Files, Two Purposes

In practice I use two files: llms.txt and llms-full.txt.

llms.txt is the concise version — a high-level summary of the site with a pointer to the fuller document. It is what gets read when a crawler makes a quick pass.

llms-full.txt is the complete version — every project, every note, every document, every album. The full picture.

The llms.txt ends with a deliberate hint:

```markdown
## AI Usage Notes

Use this file as a concise map of the website. For fuller content, read /llms-full.txt.
```

If a crawler wants everything, it knows exactly where to go.
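As a rough illustration of how the concise file could be assembled, here is a minimal sketch. The `SiteSummary` shape, the `buildLlmsTxt` name, and the sample values are assumptions made for this example, not the site's actual code; only the closing usage note is taken from the text above.

```typescript
// Hypothetical shape for the concise file; none of these names come from the site's code.
interface SiteSummary {
  name: string;
  tagline: string;
  sections: { title: string; summary: string }[];
}

// Build the concise llms.txt: a title, a one-line description, short sections,
// and a closing note that points crawlers at the fuller document.
function buildLlmsTxt(site: SiteSummary, fullPath = "/llms-full.txt"): string {
  const lines: string[] = [`# ${site.name}`, "", site.tagline, ""];
  for (const section of site.sections) {
    lines.push(`## ${section.title}`, "", section.summary, "");
  }
  lines.push(
    "## AI Usage Notes",
    "",
    `Use this file as a concise map of the website. For fuller content, read ${fullPath}.`
  );
  return lines.join("\n") + "\n";
}

// Placeholder data, purely for illustration.
const conciseTxt = buildLlmsTxt({
  name: "Example Site",
  tagline: "Personal site of a full-stack engineer.",
  sections: [{ title: "Projects", summary: "Selected work, one line each." }],
});
console.log(conciseTxt);
```

Keeping the pointer as the last section means a crawler that reads only this file still learns where the complete version lives.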

4. Serving It in Next.js

Both files are served via route handlers with force-static.

```typescript
// src/app/llms-full.txt/route.ts
// The load* helpers and buildLlmsFullTxt are the site's own content modules;
// their imports are omitted here.

// Prerender this route at build time instead of running it per request.
export const dynamic = "force-static";

export async function GET() {
  // Load every content collection in parallel.
  const [aboutContent, desktopItems, documentItems, musicAlbums, noteItems, reminderContent] =
    await Promise.all([
      loadAboutContent(),
      loadDesktopItems(),
      loadDocuments(),
      loadMusicAlbums(),
      loadNotes(),
      loadReminders(),
    ]);

  // Serve the assembled document as UTF-8 plain text.
  return new Response(
    buildLlmsFullTxt({ aboutContent, desktopItems, documentItems, musicAlbums, noteItems, reminderContent }),
    { headers: { "content-type": "text/plain; charset=utf-8" } }
  );
}
```

force-static means this runs at build time, not on every request. The file is generated once when you deploy and served from the edge. No function invocations. No per-request latency.

Promise.all loads all content collections in parallel. The output is a single plain-text string.

5. Stripping Markdown Before It Leaves

Content on this site is written in Markdown — links, bold text, image syntax, code blocks.

AI crawlers can process Markdown, but there is a cleaner option: strip the formatting before it reaches the file. What the crawler receives is pure prose, without the overhead.

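```typescript
export function toPlainText(value: string): string {
  return value
    // Fenced code blocks collapse to a single space.
    .replace(/```[\s\S]*?```/g, " ")
    // Images (![alt](src)) are dropped entirely.
    .replace(/!\[[^\]]*]\([^)]+\)/g, " ")
    // Links ([label](url)) keep only their label.
    .replace(/\[([^\]]+)]\([^)]+\)/g, "$1")
    // Remaining formatting symbols are removed.
    .replace(/[*_`>#]/g, "")
    // Runs of whitespace, including newlines, become one space.
    .replace(/\s+/g, " ")
    .trim();
}
```

Code blocks become a space. Images disappear. Links become their label text. Formatting symbols are removed.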

What remains is readable prose. The content, not the markup.
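To make the effect concrete, here is a small usage sketch. The function body repeats the steps of toPlainText above so the snippet runs on its own; the sample strings are made up for this example.

```typescript
// Same steps as toPlainText above, repeated so this snippet is self-contained.
function toPlainText(value: string): string {
  return value
    .replace(/```[\s\S]*?```/g, " ")
    .replace(/!\[[^\]]*]\([^)]+\)/g, " ")
    .replace(/\[([^\]]+)]\([^)]+\)/g, "$1")
    .replace(/[*_`>#]/g, "")
    .replace(/\s+/g, " ")
    .trim();
}

// Build the code fence from single backticks to keep the sample readable.
const fence = "`".repeat(3);
const sample = [
  "See **[the docs](https://example.com/docs)** for `setup`.",
  "",
  "![diagram](diagram.png)",
  "",
  `${fence}ts`,
  "const x = 1;",
  fence,
  "",
  "> Done.",
].join("\n");

const plain = toPlainText(sample);
console.log(plain); // "See the docs for setup. Done."

// One trade-off: underscores inside identifiers are removed as well.
const identifier = toPlainText("Set max_retries to 3");
console.log(identifier); // "Set maxretries to 3"
```

The second call shows a side effect worth knowing about: because the symbol filter is character-based, snake_case names in prose lose their underscores.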

6. Semantic Sections Over Flat Dumps

The structure of llms-full.txt is deliberate.

```markdown
# Sandi Maulana Juhana

EN: Full-stack engineer...
ID: ...

## Profile

EN: ...
ID: ...
Roles: ...
Expertise: ...

# Projects

## Project: DesktopShell

...

# Notes

## Note: When Every File Knows Its Job

...
```

LLMs parse headings as context boundaries. A section labeled ## Project: DesktopShell tells the model that everything beneath it belongs to that project — even without explicit metadata fields.

Flat dumps of text are harder to parse accurately. Structure gives the model something to hold on to. It is the difference between handing someone a transcript and handing them a document.
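The heading layout described above could be produced by a small renderer along these lines. This is a sketch under assumptions: the `Entry` type, `renderCollection`, and `buildFullTxtSketch` are hypothetical names, and the summaries are placeholders rather than real site content.

```typescript
// Hypothetical content shape; the site's real types are not shown in this article.
interface Entry {
  title: string;
  summary: string;
}

// Render one top-level collection: a "# Group" heading, then a labeled
// "## Label: Title" heading per entry so each item has its own boundary.
function renderCollection(group: string, label: string, entries: Entry[]): string {
  const blocks = entries.map((entry) => `## ${label}: ${entry.title}\n\n${entry.summary}`);
  return [`# ${group}`, ...blocks].join("\n\n");
}

// Join the collections into one plain-text document.
function buildFullTxtSketch(projects: Entry[], notes: Entry[]): string {
  return [
    renderCollection("Projects", "Project", projects),
    renderCollection("Notes", "Note", notes),
  ].join("\n\n") + "\n";
}

const fullTxt = buildFullTxtSketch(
  [{ title: "DesktopShell", summary: "Placeholder summary for the project." }],
  [{ title: "When Every File Knows Its Job", summary: "Placeholder summary for the note." }]
);
console.log(fullTxt);
```

Repeating the label in every heading ("Project:", "Note:") keeps each entry self-describing even if a model only sees a fragment of the file.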

7. Extracting the Best Available Summary

Not every note has a description field. Some are just collections of sections and paragraphs — written without a dedicated summary.

The noteSummary function handles this with a waterfall:

```typescript
export function noteSummary(note: NoteItem): string {
  // Candidates in priority order: description, content, overview,
  // then each section's paragraphs, callouts, and bullets.
  const candidates = [
    note.description,
    ...(note.content ?? []),
    ...(note.overview ?? []),
    ...(note.sections?.flatMap((section) => [
      ...(section.paragraphs ?? []),
      ...(section.callouts ?? []),
      ...(section.bullets ?? []),
    ]) ?? []),
  ];

  // Take the first candidate that is non-empty after stripping Markdown;
  // otherwise fall back to the title and site name.
  return candidates
    .map((value) => (value ? toPlainText(value) : ""))
    .find(Boolean) ?? `${note.title} by ${site.name}.`;
}
```

Start with description. If that is empty, try content, then overview. If those are empty too, walk the sections in order and try each one's paragraphs, callouts, and bullets. If everything is empty, fall back to the title and site name.

The result: every note gets the best available summary — not a placeholder, not an empty string. The structure of the content model determines what gets surfaced, and the waterfall makes sure something meaningful always comes through.
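A short, self-contained sketch of the waterfall in action follows. The `NoteItem` and `NoteSection` types, the `site` object, and the simplified `toPlainText` stand-in are assumptions inferred from the fields the function reads, not the site's real definitions.

```typescript
// Hypothetical minimal versions of the types and helpers that noteSummary relies on.
interface NoteSection {
  paragraphs?: string[];
  callouts?: string[];
  bullets?: string[];
}
interface NoteItem {
  title: string;
  description?: string;
  content?: string[];
  overview?: string[];
  sections?: NoteSection[];
}
const site = { name: "Example Site" }; // placeholder site name

// Simplified stand-in for toPlainText: drops formatting symbols, collapses whitespace.
const toPlainText = (value: string): string =>
  value.replace(/[*_`>#]/g, "").replace(/\s+/g, " ").trim();

function noteSummary(note: NoteItem): string {
  const candidates: (string | undefined)[] = [
    note.description,
    ...(note.content ?? []),
    ...(note.overview ?? []),
    ...(note.sections?.flatMap((section) => [
      ...(section.paragraphs ?? []),
      ...(section.callouts ?? []),
      ...(section.bullets ?? []),
    ]) ?? []),
  ];
  return (
    candidates.map((value) => (value ? toPlainText(value) : "")).find(Boolean) ??
    `${note.title} by ${site.name}.`
  );
}

// Empty description, so the first non-empty section value wins.
const fromSection = noteSummary({
  title: "Untitled",
  description: "",
  sections: [{ bullets: ["first bullet"] }, { paragraphs: ["**Later** paragraph"] }],
});
// Nothing to draw from, so the title fallback applies.
const fromTitle = noteSummary({ title: "Empty Note" });

console.log(fromSection); // "first bullet"
console.log(fromTitle); // "Empty Note by Example Site."
```

Note that the sections are walked one at a time: a bullet in the first section is picked before a paragraph in a later section.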

8. Multilingual Content Without Duplicate Files

This site has content in three languages: English, Indonesian, and Arabic.

The naive approach is one llms.txt per language — three files, three maintenance surfaces. The approach I took is different: a single file with language-labeled sections.

```text
EN: Full-stack engineer working at the intersection of product and system design.
ID: Engineer full-stack yang bekerja di persimpangan antara produk dan desain sistem.
```

Every field that has a translation gets an explicit EN: or ID: prefix. The model reads both, and when a user asks a question in a specific language, it can surface the correct version without needing separate files.

This is not a format the llms.txt spec mandates. It is a decision made specifically for a multilingual site — one way to solve a problem the standard does not yet address.
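One way the language-labeled lines could be generated is sketched below. The `Localized` type and `renderLocalized` helper are hypothetical; the article does not show how the site actually stores or renders its translations.

```typescript
// Hypothetical shape: one optional value per language code.
type Localized = Partial<Record<"en" | "id", string>>;

// Emit one labeled line per available translation, in a fixed order.
function renderLocalized(value: Localized): string {
  const order: ("en" | "id")[] = ["en", "id"];
  return order
    .filter((lang) => Boolean(value[lang]))
    .map((lang) => `${lang.toUpperCase()}: ${value[lang]}`)
    .join("\n");
}

const labeled = renderLocalized({
  en: "Full-stack engineer working at the intersection of product and system design.",
  id: "Engineer full-stack yang bekerja di persimpangan antara produk dan desain sistem.",
});
console.log(labeled);

// Fields without a translation simply produce fewer lines.
const englishOnly = renderLocalized({ en: "Hello" });
console.log(englishOnly); // "EN: Hello"
```

A fixed language order keeps every field in the file consistent, which makes the prefixes easier for a model to treat as a pattern.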

9. What This Actually Changes

Most AI systems that mention a website do so from incomplete information.

They visited a few pages. They processed a cached version. They read the most visible content and made inferences about the rest.

llms.txt does not guarantee accuracy. But it gives the model a complete, curated, current representation of the site — and removes most of the guesswork.

For a portfolio, that gap matters. The difference between "a developer who works with React" and "a full-stack engineer with specific expertise in multilingual content, distributed systems, and SEO infrastructure" is in the details. Those details live in the file.

Closing Reflection

robots.txt tells crawlers where not to go.

llms.txt tells them what is actually here.

One is a boundary. The other is an introduction.

The web is being read by a different kind of reader now. Not just indexers that rank pages — but systems that synthesize information and answer questions on behalf of users.

The sites that explain themselves clearly will be understood more accurately. Not because of any algorithm trick. Just because they wrote the document.